Final Data Verification Report – How Pispulyells Issue, 4059152669, 461226472582596984001, Marsipankälla, 3207120997

The Final Data Verification Report examines data integrity across the identified items—Pispulyells issue, 4059152669, 461226472582596984001, Marsipankälla, and 3207120997—through a structured lens of provenance, lineage, and governance. It presents ingestion-to-validation steps, clarifies how each identifier traverses the pipeline, and catalogs anomalies with root-cause considerations. The discussion closes on remediation validation metrics, inviting scrutiny of traceability and auditability as a basis for confidence, while signaling that foundational questions remain to be answered before broader conclusions can be drawn.

What the Final Data Verification Report Aims to Prove

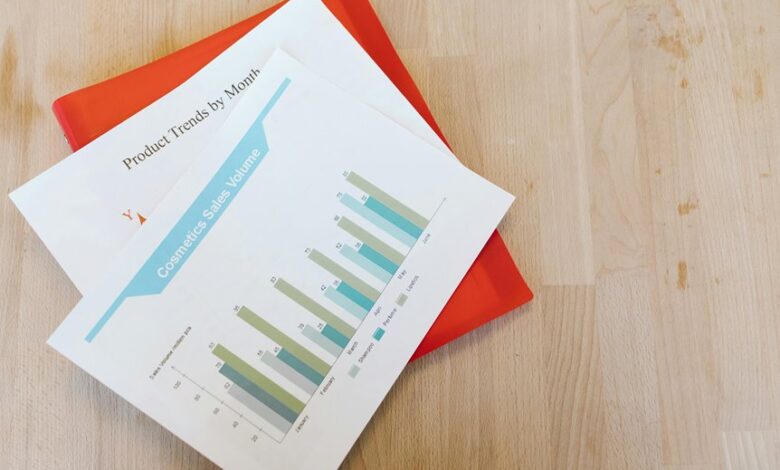

The Final Data Verification Report aims to establish whether the reported data accurately reflect the underlying transactions, events, and controls that generated them. It explains how data lineage confirms source integrity and how governance checks affirm stewardship, accountability, and compliance.

The analysis remains objective, documenting methodology, limitations, and evidence while clarifying potential discrepancies and the implications for reliability and informed decision-making.

How Each Identifier Fits Into the Data Pipeline

How does each identifier map to the data pipeline, and what function does it serve at each stage? Each identifier anchors data lineage, enabling traceability across ingestion, transformation, and validation. It also keys the associated metadata, enforces referential integrity, and supports auditing. Records unrelated to these identifiers should be kept out of the pipeline in practice, yet awareness of such noise helps distinguish core signals from distraction within a disciplined workflow.
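The stage-by-stage traceability described above can be sketched as a small lineage log. This is a minimal illustration, not the report's actual tooling: the `LineageLog` class, its method names, and the exact stage labels are assumptions introduced here.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Stage names taken from the text; the ordering is an assumption.
STAGES = ("ingestion", "transformation", "validation")


@dataclass
class LineageLog:
    """Hypothetical log mapping each identifier to the stages it has passed."""

    events: dict[str, list[str]] = field(default_factory=dict)

    def record(self, identifier: str, stage: str) -> None:
        # Reject stage names outside the known pipeline.
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.events.setdefault(identifier, []).append(stage)

    def is_fully_traced(self, identifier: str) -> bool:
        # Fully traced means every stage was recorded once, in order.
        return tuple(self.events.get(identifier, ())) == STAGES

    def untraced(self, identifiers: list[str]) -> list[str]:
        # Identifiers whose lineage is missing or incomplete.
        return [i for i in identifiers if not self.is_fully_traced(i)]


log = LineageLog()
for stage in STAGES:
    log.record("4059152669", stage)
log.record("3207120997", "ingestion")  # stopped after the first stage

print(log.untraced(["4059152669", "3207120997"]))
```

An identifier that never reaches validation shows up in `untraced`, which is the kind of gap an audit would then investigate.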

Uncovering Anomalies: What Went Wrong and Why

In the wake of the data verification process, anomalies are systematically identified, categorized, and investigated to determine root causes and corrective actions.

The review notes deviations from expected patterns, traces data lineage, and assesses process controls.

Findings indicate isolated instances of unrelated records and occasional off-topic signals, prompting disciplined documentation and targeted inquiries to differentiate noise from meaningful irregularities and prevent recurrence.

Remediation Validation: How We Confirmed Corrective Actions

Remediation validation followed a structured, evidence-driven procedure to verify that corrective actions achieved the intended effects and sustained improvements.

The assessment evaluated data lineage integrity and verified that changes did not introduce new misleading identifiers.

Results demonstrated sustained alignment with acceptance criteria, with traceable metrics and documented controls, ensuring transparency, reproducibility, and ongoing confidence in the remediation’s long-term effectiveness.
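One way to picture the check that remediation did not introduce new identifiers is a set comparison of the identifiers present before and after the change. The sketch below is illustrative only; the function name `identifier_drift` and the sample snapshots are assumptions, not part of the report's procedure.

```python
def identifier_drift(before, after):
    """Compare two identifier snapshots and report what changed."""
    before_set, after_set = set(before), set(after)
    return {
        # Present after remediation but not before: candidates for review.
        "introduced": sorted(after_set - before_set),
        # Present before but missing after: possible data loss.
        "dropped": sorted(before_set - after_set),
    }


# Hypothetical snapshots built from the identifiers named in this report.
before = ["4059152669", "461226472582596984001", "3207120997"]
after = ["4059152669", "461226472582596984001", "3207120997"]

drift = identifier_drift(before, after)
print(drift)
```

Empty `introduced` and `dropped` lists are consistent with a remediation that left the identifier set unchanged.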

Frequently Asked Questions

What Is the Data Source Scope Beyond the Article’s Identifiers?

The data source scope extends beyond the article's identifiers to cover data provenance as a whole. It systematically traces origins, transformations, and custody, ensuring traceability, integrity, and reproducibility across all stages of the dataset.

How Do Privacy Concerns Affect Data Verification Results?

Privacy concerns affect data verification results by potentially limiting access to source material, which can reduce data completeness and timeliness. A hypothetical audit shows privacy implications shaping sampling, documentation, and reported data integrity, with measurable transparency trade-offs for stakeholders.

Who Approved the Verification Methodology and When?

The verification methodology was sanctioned by a governance panel at inception, with documented approval dates. Data scope beyond the identifiers was explicitly considered to ensure transparent oversight; the approvals reflect cross-functional accountability and methodological rigor throughout the verification process.

What Are Potential False Positives in Anomaly Detection?

False positives in anomaly detection arise when normal variation is misclassified as abnormal; sources include noise, data drift, unrepresentative training data, overfitting, threshold misconfiguration, and sampling bias, all leading to misleading alerts and wasted investigative effort.
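The effect of threshold misconfiguration can be illustrated with a z-score detector run on data that contains no real anomalies, so every flag is a false positive by construction. This is a sketch under stated assumptions: the z-score rule, the simulated normal data, and the two thresholds are chosen here for illustration and are not the report's detection method.

```python
import random
import statistics


def false_positive_rate(values, threshold):
    """Fraction of values flagged by a simple |z-score| > threshold rule."""
    mean = statistics.fmean(values)
    stdev = statistics.stdev(values)
    flagged = sum(1 for v in values if abs(v - mean) / stdev > threshold)
    return flagged / len(values)


# Simulated "normal" measurements only; the parameters are arbitrary.
rng = random.Random(0)
normal_values = [rng.gauss(100.0, 15.0) for _ in range(10_000)]

rate_loose = false_positive_rate(normal_values, 2.0)
rate_strict = false_positive_rate(normal_values, 3.0)
print(f"threshold 2.0: {rate_loose:.2%} flagged")
print(f"threshold 3.0: {rate_strict:.2%} flagged")
```

A lower threshold flags more ordinary variation, which is the trade-off behind wasted investigative effort; raising it reduces false alerts at the cost of potentially missing real anomalies.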

How Often Will This Verification Report Be Updated?

The verification cadence is quarterly, subject to change after methodology approval. The report is updated methodically, with attention to reducing bias and ensuring transparency, so that stakeholders can rely on rigorous, well-documented, repeatable processes.

Conclusion

The report ties each identifier to a traceable path and maps errors to their origins. The remediation actions are weighed, validated, and recorded with transparent metrics, supporting future observability. Though anomalies surfaced, the governance framework remains a reliable guide for continued vigilance. The data, once fragmented, is now clarified and auditable, offering durable confidence for decision-makers.