Neural Node 945064200 Fusion Flow

Neural Node 945064200 Fusion Flow is a modular, graph-based approach to integrating neural components. Each node is a configurable module with defined interfaces for routing, workload distribution, and parallelism across heterogeneous resources. Data moves through the graph as tokens, which keeps computation local where possible while still allowing inference to scale. The approach targets workloads ranging from edge inference to large-scale simulation, but it also depends on governance and tooling support. Reproducibility and optimization therefore need deliberate attention, and questions about deployment maturity and operational trade-offs remain open.

What Is Neural Node 945064200 Fusion Flow?

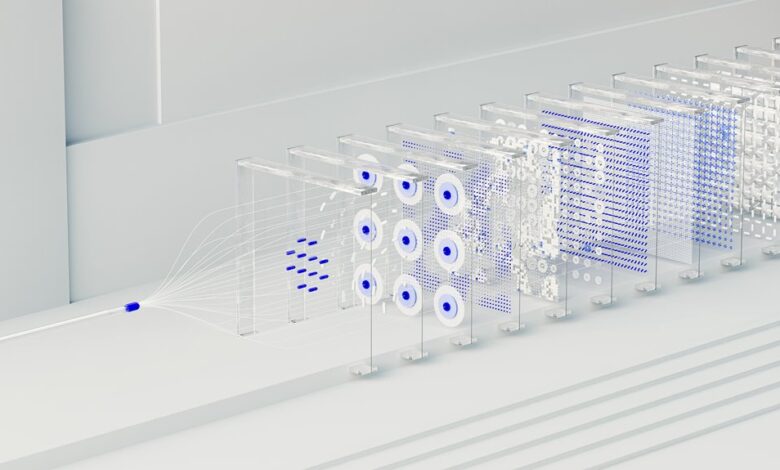

Neural Node 945064200 Fusion Flow refers to a structured process for integrating neural computational components into a single functional pipeline. It treats each neural node as a module, and the behavior of the overall flow emerges from the interfaces and constraints those modules define. The emphasis is on dynamic routing and parallelism, with the goals of coherent data movement, dependable aggregation, and scalable, modular computation.
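To make the idea of a node as a module with a defined interface more concrete, here is a minimal Python sketch. Nothing in it comes from a published Fusion Flow API: `Token`, `Node`, `ScaleNode`, `OffsetNode`, and `run_pipeline` are hypothetical names chosen for illustration, and the arithmetic stands in for whatever computation a real node would perform.

```python
from dataclasses import dataclass, field
from typing import Any, List, Protocol


@dataclass
class Token:
    """Hypothetical unit of data moving through the pipeline."""
    payload: Any
    trace: List[str] = field(default_factory=list)  # names of nodes visited so far


class Node(Protocol):
    """Hypothetical interface: anything with a name and a process() method."""
    name: str

    def process(self, token: Token) -> Token: ...


@dataclass
class ScaleNode:
    name: str
    factor: float

    def process(self, token: Token) -> Token:
        # Return a new token rather than mutating the input.
        return Token(token.payload * self.factor, token.trace + [self.name])


@dataclass
class OffsetNode:
    name: str
    offset: float

    def process(self, token: Token) -> Token:
        return Token(token.payload + self.offset, token.trace + [self.name])


def run_pipeline(nodes: List[Node], token: Token) -> Token:
    """Pass a token through each node in order."""
    for node in nodes:
        token = node.process(token)
    return token


result = run_pipeline(
    [ScaleNode("scale", 2.0), OffsetNode("offset", 1.0)],
    Token(3.0),
)
print(result.payload, result.trace)  # 7.0 ['scale', 'offset']
```

Because both node classes satisfy the same interface, either can be swapped or reordered without changing `run_pipeline`, which is the property the modular framing relies on.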

How Fusion Flow Orchestrates Dynamic Routing and Parallelism

Fusion Flow orchestrates dynamic routing and parallelism by mapping data as tokens through a graph of modular neural nodes, where each node exposes interfaces that guide routing decisions and workload distribution.

This design lets the flow reallocate tasks at run time, with the aim of reducing latency and balancing throughput.

Routing decisions emerge from policies local to each node, while an orchestration layer coordinates parallelism strategies across heterogeneous resources.
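The routing and parallelism described in this section can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the system's actual implementation: `GraphNode`, `build_graph`, `run_token`, and `run_batch` are hypothetical names, the size threshold in the routing policy is arbitrary, and a thread pool stands in for whatever scheduler a real deployment would use.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Token:
    """Hypothetical unit of data routed through the graph."""
    payload: int


@dataclass
class GraphNode:
    name: str
    work: Callable[[int], int]              # the node's computation
    route: Callable[[int], Optional[str]]   # local policy: next node name, or None to stop


def build_graph() -> Dict[str, GraphNode]:
    # Assumed example graph: "ingest" sends small values one way, large ones another.
    return {
        "ingest": GraphNode("ingest", lambda x: x + 1,
                            lambda y: "small" if y < 10 else "large"),
        "small": GraphNode("small", lambda x: x * 2, lambda y: None),
        "large": GraphNode("large", lambda x: x // 2, lambda y: None),
    }


def run_token(graph: Dict[str, GraphNode], start: str, token: Token) -> Tuple[int, List[str]]:
    """Follow each node's local routing policy until a node returns None."""
    value, path = token.payload, []
    current: Optional[str] = start
    while current is not None:
        node = graph[current]
        value = node.work(value)
        path.append(node.name)
        current = node.route(value)
    return value, path


def run_batch(graph: Dict[str, GraphNode], start: str,
              tokens: List[Token], workers: int = 4) -> List[Tuple[int, List[str]]]:
    """Dispatch independent tokens concurrently; results keep input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda t: run_token(graph, start, t), tokens))


graph = build_graph()
results = run_batch(graph, "ingest", [Token(3), Token(40)])
print(results)  # [(8, ['ingest', 'small']), (20, ['ingest', 'large'])]
```

Each node decides the next hop from its own output, so no central router needs to know the whole graph. The thread pool only illustrates the dispatch pattern; in standard CPython, CPU-bound work would need processes or accelerator-backed nodes to run truly in parallel.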

Practical Edge Inference to Large-Scale Simulations With Fusion Flow

Fusion Flow’s modular neural nodes are intended to let the same design serve both real-time, resource-constrained edge inference and large-scale simulations. Dynamic routing and parallelism allow computation to stay local, which limits the bandwidth needed between nodes. Deployment challenges persist, and robust performance depends on disciplined architecture and monitoring. Next steps emphasize reproducibility, optimization, and scalable tooling for broader adoption and insight extraction.

Overcoming Challenges and Next Steps for Deployment

Despite notable progress, deployment remains constrained by integration complexity, data governance, and resource heterogeneity. These constraints are both conceptual challenges and operational gaps, and they call for a disciplined deployment roadmap. A structured sequence of milestones clarifies risk, validation, and governance at each stage while preserving autonomy. Ultimately, success requires measurable criteria, cross-domain alignment, and iterative refinement across teams, tools, and data interfaces.

Conclusion

Neural Node 945064200 Fusion Flow enables modular, scalable neural orchestration across edge and cloud. Its token-driven graph and configurable routing keep computation local while maintaining coherent global coordination. As an illustration, picture a factory floor once burdened by monolithic inference that now runs pipelines which redirect tasks like trains in a switchyard, improving throughput without a hardware overhaul. In data terms, dynamic routing aims to reduce latency while preserving accuracy, marking a disciplined path from isolated modules to integrated, reproducible, large-scale simulations.